Unsupervised Learning for Program Repair and Grammatical Error Correction

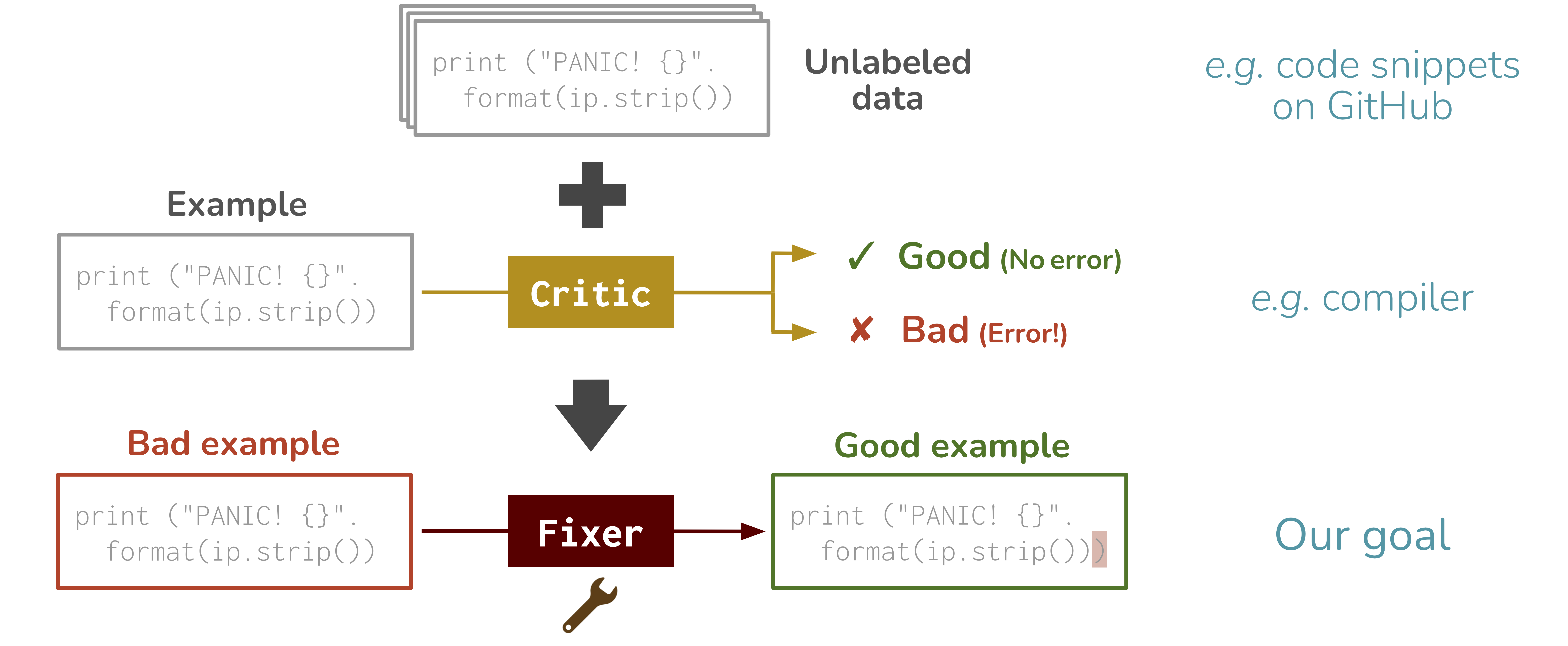

Break-It-Fix-It (BIFI) is an unsupervised method to train a model for fixing source code errors, purely from unlabeled data (raw code snippets) and a critic (a tool that checks the validity of code, such as compiler).

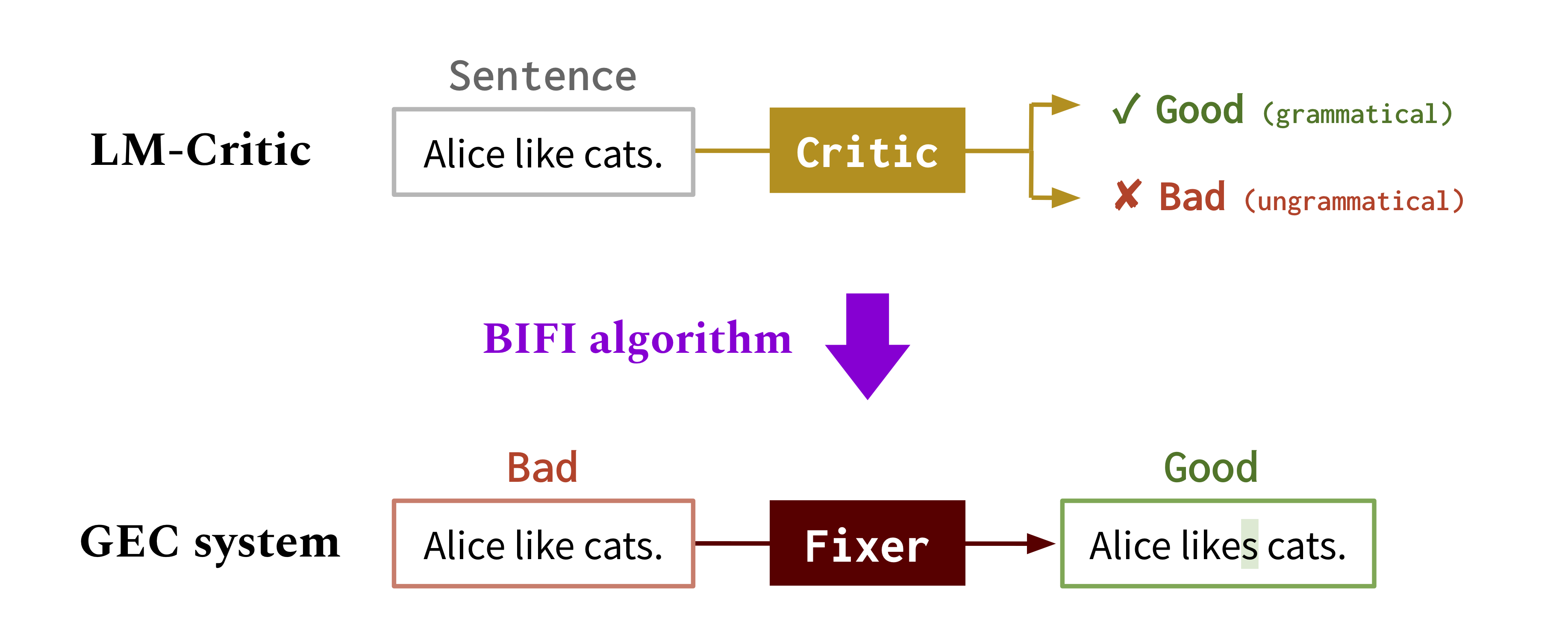

Moreover, to extend BIFI to fixing text errors where there is no perfect critic, we develop LM-Critic, an approximate critic that uses pretrained language models to assess the validity of text input.

BIFI and LM-Critic provide significant performance boost over existing systems on program repair and grammatical error correction benchmarks.

Motivation

Automating the correction of source code (e.g. fixing compiler errors) and text (e.g. fixing typos and grammatical errors) can greatly enhance our productivity of programming and writing. A major challenge in training machine learning models (fixers) for such repair tasks is to obtain parallel data, e.g. labeled broken-fixed code pairs. However, manual labeling is expensive. Existing works create synthetic paired data by applying heuristics to corrupt good code, but it does not generalize to errors in real human-written code or text. How to obtain inexpensive yet realistic training data for repair tasks remains an open research problem.Method: Break-It-Fix-It

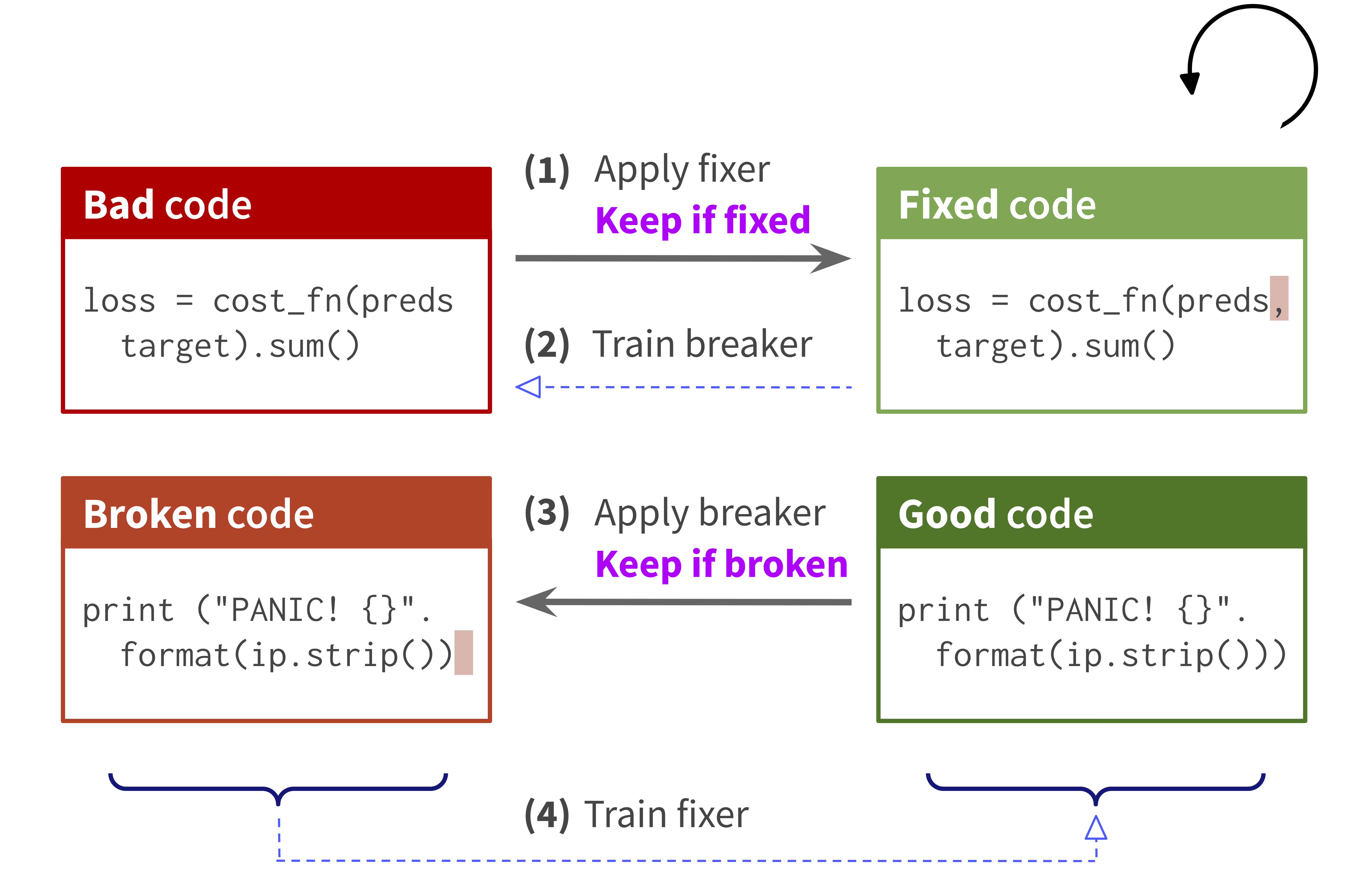

To tackle this challenge, we develop a new approach, Break-It-Fix-It (BIFI), which bootstraps realistic paired data and trains the fixer without manual labeling. The BIFI algorithm takes three inputs:- Critic, which is a tool that assesses the validity of input code, such as a compiler and code analyzer.

- Unlabeled data, which can be raw code snippets on the web such as GitHub. Using the critic, we obtain a set of good code and a set of bad code.

- Initial fixer, which can be the one trained on the naive synthetic data

Advanced method: LM-Critic

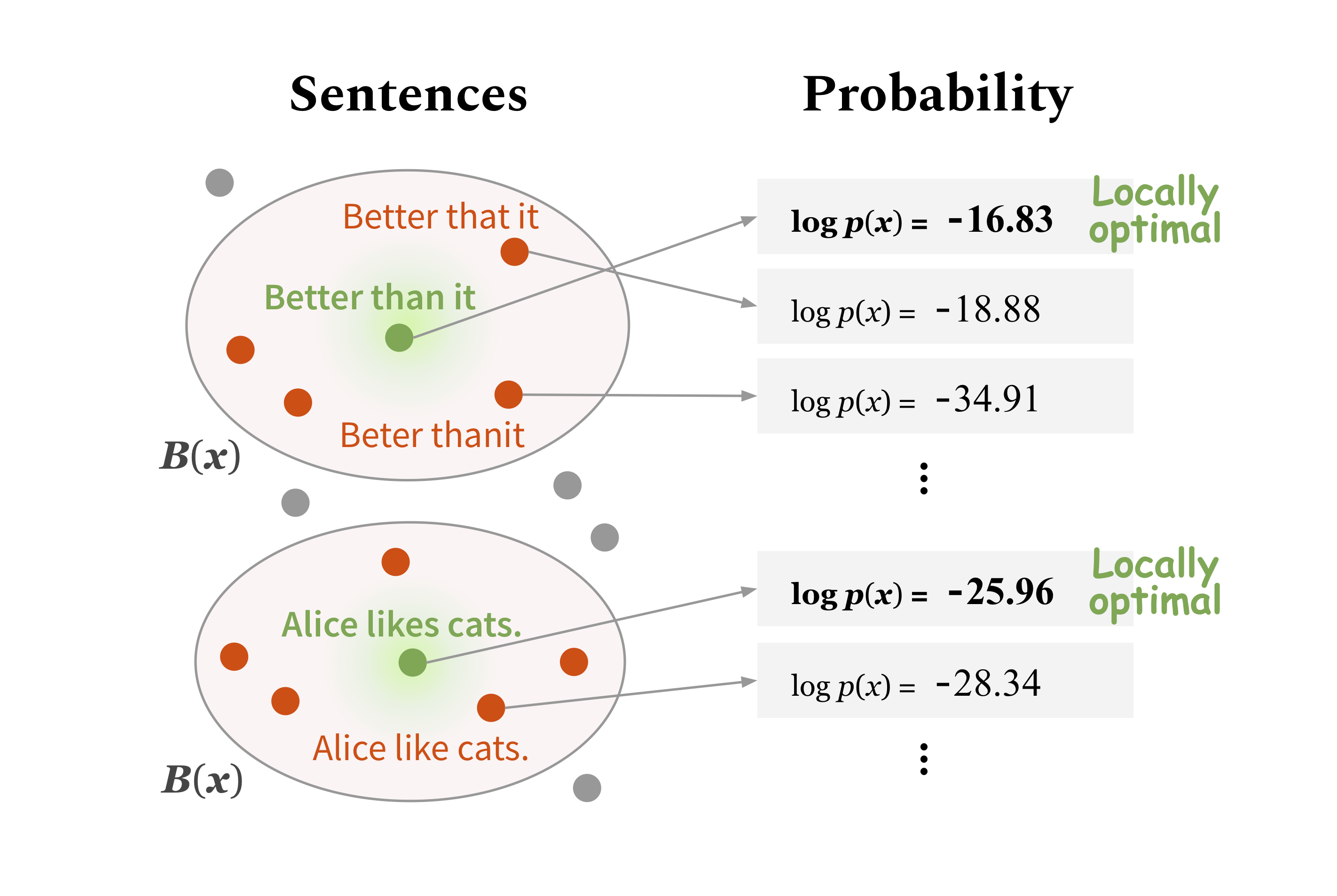

When applying BIFI to fixing source code errors, we can use a perfect critic for code such as compilers. However, in domains other than code such as natural language text, such oracle critic does not exist. To generalize BIFI to fixing text errors (grammatical error correction), we develop LM-Critic, an approximate critic that uses pretrained language models to assess the validity of text input. A language model (LM) learns a probability distribution of sentences from text corpora. The idea of LM-Critic is to deem a sentence as valid if the pretrained LM assigns it a higher probability than its local perturbations.

Code

Our code is available on GitHub:Datasets

The datasets used are included in the code repository.Contributors

The following people contributed to this project:Michihiro Yasunaga

Jure Leskovec

Percy Liang

References

Break-It-Fix-It: Unsupervised Learning for Program Repair. M. Yasunaga, P. Liang. International Conference on Machine Learning (ICML), 2021.LM-Critic: Language Models for Unsupervised Grammatical Error Correction. M. Yasunaga, J. Leskovec, P. Liang. Empirical Methods in Natural Language Processing (EMNLP), 2021.