SNAP Library 6.0, Developer Reference

2020-12-09 16:24:20

SNAP, a general purpose, high performance system for analysis and manipulation of large networks

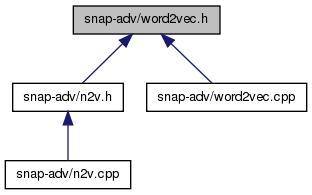


Functions

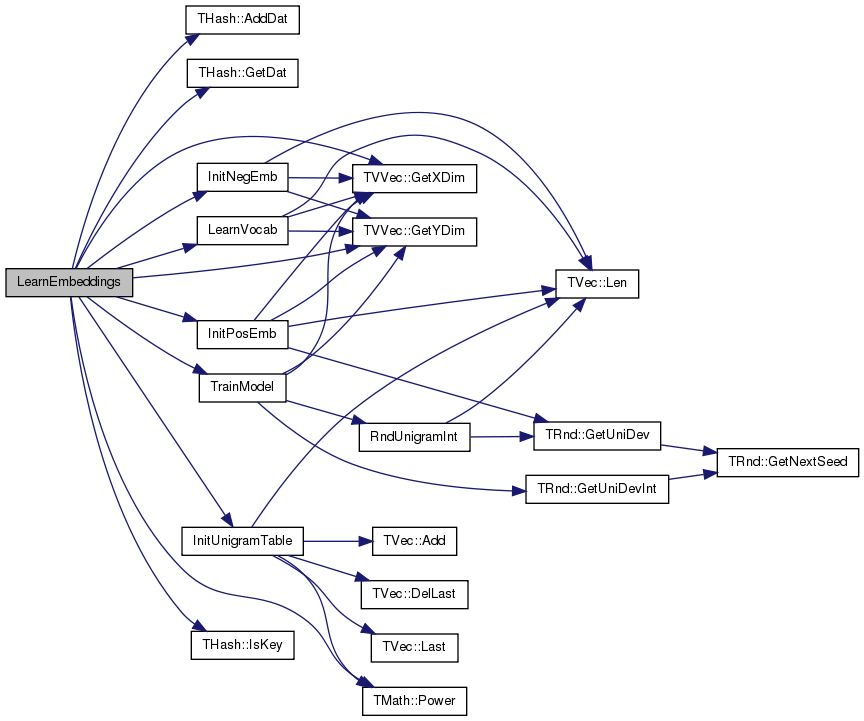

| void | LearnEmbeddings (TVVec< TInt, int64 > &WalksVV, const int &Dimensions, const int &WinSize, const int &Iter, const bool &Verbose, TIntFltVH &EmbeddingsHV) |

| Learns embeddings with stochastic gradient descent (SGD) on a skip-gram model with negative sampling. |

Variables

| const int | MaxExp = 6 |

| const int | ExpTablePrecision = 10000 |

| const int | TableSize = MaxExp*ExpTablePrecision*2 |

| const int | NegSamN = 5 |

| const double | StartAlpha = 0.025 |

| void LearnEmbeddings (TVVec< TInt, int64 > &WalksVV, const int &Dimensions, const int &WinSize, const int &Iter, const bool &Verbose, TIntFltVH &EmbeddingsHV) |

Learns embeddings with stochastic gradient descent (SGD) on a skip-gram model with negative sampling.

Definition at line 160 of file word2vec.cpp.

References THash< TKey, TDat, THashFunc >::AddDat(), TMath::E, ExpTablePrecision, THash< TKey, TDat, THashFunc >::GetDat(), TVVec< TVal, TSizeTy >::GetXDim(), TVVec< TVal, TSizeTy >::GetYDim(), InitNegEmb(), InitPosEmb(), InitUnigramTable(), THash< TKey, TDat, THashFunc >::IsKey(), LearnVocab(), MaxExp, TMath::Power(), StartAlpha, TableSize, and TrainModel().

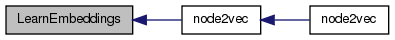

Referenced by node2vec().
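
To make the description above concrete, the following is a minimal sketch of a single skip-gram update with negative sampling, written in plain standard C++. It does not reproduce TrainModel() or any other SNAP code: the names `Vec`, `Sigmoid`, `UpdatePair`, and `SgnsStep` are hypothetical, and the update rule is the textbook one, assumed here for illustration only.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

typedef std::vector<double> Vec;  // hypothetical alias, not a SNAP type

// Logistic function.
double Sigmoid(double X) { return 1.0 / (1.0 + std::exp(-X)); }

// Hypothetical helper: one SGD step for a (center, other) pair.
// Label is 1 for an observed context node and 0 for a negative sample.
// The gradient for the center vector is accumulated into Grad;
// Other is updated in place.
void UpdatePair(const Vec& Center, Vec& Other, int Label, double Alpha, Vec& Grad) {
  assert(Center.size() == Other.size() && Center.size() == Grad.size());
  double Dot = 0.0;
  for (std::size_t d = 0; d < Center.size(); d++) { Dot += Center[d] * Other[d]; }
  const double G = (Label - Sigmoid(Dot)) * Alpha;
  for (std::size_t d = 0; d < Center.size(); d++) {
    Grad[d] += G * Other[d];   // reads Other before it is changed
    Other[d] += G * Center[d];
  }
}

// Hypothetical helper: full update for one center node, given its observed
// context vector and a set of negative-sample vectors.
void SgnsStep(Vec& Center, Vec& Context, std::vector<Vec>& Negatives, double Alpha) {
  Vec Grad(Center.size(), 0.0);
  UpdatePair(Center, Context, 1, Alpha, Grad);
  for (std::size_t n = 0; n < Negatives.size(); n++) {
    UpdatePair(Center, Negatives[n], 0, Alpha, Grad);
  }
  // Apply the accumulated gradient to the center vector last.
  for (std::size_t d = 0; d < Center.size(); d++) { Center[d] += Grad[d]; }
}
```

In this sketch the observed context vector moves toward the center vector while each negative sample moves away from it; the center vector is changed only after all pairs have been processed, so every pair sees the same center values.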

| const int ExpTablePrecision = 10000 |

Definition at line 13 of file word2vec.h.

Referenced by LearnEmbeddings() and TrainModel().

| const int MaxExp = 6 |

Definition at line 10 of file word2vec.h.

Referenced by LearnEmbeddings(), LogSumExp(), TrainModel(), TMAGFitBern::UpdateApxPhiMI(), TMAGFitBern::UpdatePhi(), and TMAGFitBern::UpdatePhiMI().

| const int NegSamN = 5 |

Definition at line 17 of file word2vec.h.

Referenced by TrainModel().
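
This page does not say how NegSamN is used. Assuming it is the number of negative samples drawn per training pair, the sketch below shows one common way to draw them: from a unigram distribution raised to the 0.75 power. The exponent, the helper names `NoiseWeights` and `DrawNegatives`, and the use of `std::discrete_distribution` are all assumptions for illustration and are not taken from InitUnigramTable() or TrainModel().

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <random>
#include <vector>

const int NegSamN = 5;  // value listed on this page

// Hypothetical helper: occurrence counts raised to the 0.75 power
// (a common word2vec choice, assumed here).
std::vector<double> NoiseWeights(const std::vector<long>& Counts) {
  std::vector<double> W(Counts.size());
  for (std::size_t i = 0; i < Counts.size(); i++) {
    W[i] = std::pow(static_cast<double>(Counts[i]), 0.75);
  }
  return W;
}

// Hypothetical helper: draws NegSamN ids from the noise distribution,
// rejecting Target. Assumes at least one id other than Target has a
// positive weight; otherwise the loop would not terminate.
std::vector<int> DrawNegatives(const std::vector<double>& W, int Target, std::mt19937& Rng) {
  assert(W.size() > 1);
  std::discrete_distribution<int> Dist(W.begin(), W.end());
  std::vector<int> Out;
  while (static_cast<int>(Out.size()) < NegSamN) {
    const int Id = Dist(Rng);
    if (Id != Target) { Out.push_back(Id); }
  }
  return Out;
}
```

Raising counts to a power below 1 flattens the distribution, so rare nodes are drawn as negatives more often than their raw frequency would suggest.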

| const double StartAlpha = 0.025 |

Definition at line 20 of file word2vec.h.

Referenced by LearnEmbeddings() and TrainModel().

| const int TableSize = MaxExp*ExpTablePrecision*2 |

Definition at line 14 of file word2vec.h.

Referenced by LearnEmbeddings() and TrainModel().
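
One plausible reading of MaxExp, ExpTablePrecision, and TableSize is a precomputed table of exp(x) covering [-MaxExp, MaxExp) in steps of 1/ExpTablePrecision, which gives 6 × 10000 × 2 = 120000 entries. The References list for LearnEmbeddings() mentions TMath::E and TMath::Power(), which is consistent with that reading, but this page does not show the code. The sketch below illustrates the idea in standard C++; `BuildExpTable` and `SigmoidLookup` are hypothetical names, and the indexing scheme is an assumption.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Constants as listed on this page.
const int MaxExp = 6;
const int ExpTablePrecision = 10000;
const int TableSize = MaxExp * ExpTablePrecision * 2;

// Hypothetical helper: tabulates exp(x) for x in [-MaxExp, MaxExp),
// one entry per step of 1/ExpTablePrecision.
std::vector<double> BuildExpTable() {
  std::vector<double> Table(TableSize);
  for (int i = 0; i < TableSize; i++) {
    const double X = -MaxExp + static_cast<double>(i) / ExpTablePrecision;
    Table[i] = std::exp(X);
  }
  return Table;
}

// Hypothetical helper: approximates sigmoid(x) = exp(x) / (1 + exp(x))
// from the table, saturating to 0 or 1 outside (-MaxExp, MaxExp).
double SigmoidLookup(const std::vector<double>& Table, double X) {
  assert(static_cast<int>(Table.size()) == TableSize);
  if (X >= MaxExp) { return 1.0; }
  if (X <= -MaxExp) { return 0.0; }
  // Shift by TableSize / 2 so that x = 0 maps to the middle entry.
  const int Idx = static_cast<int>(X * ExpTablePrecision) + TableSize / 2;
  const double E = Table[Idx];
  return E / (1.0 + E);
}
```

A table like this trades a small approximation error (at most one step of 1/ExpTablePrecision in x) for avoiding a call to the exponential function inside the inner training loop.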