Beta Embeddings for Multi-Hop Logical Reasoning in Knowledge Graphs

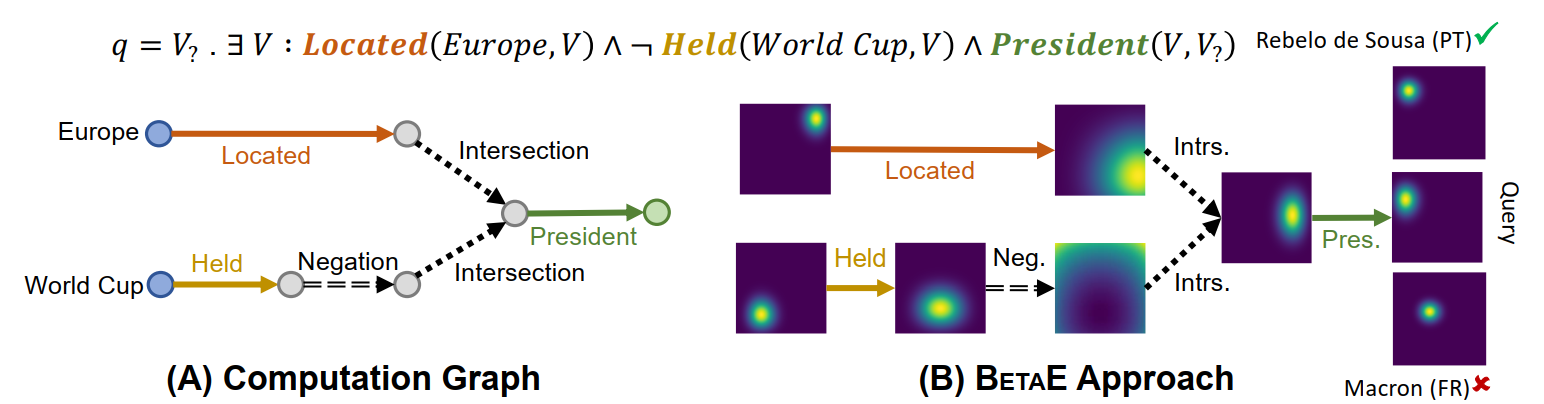

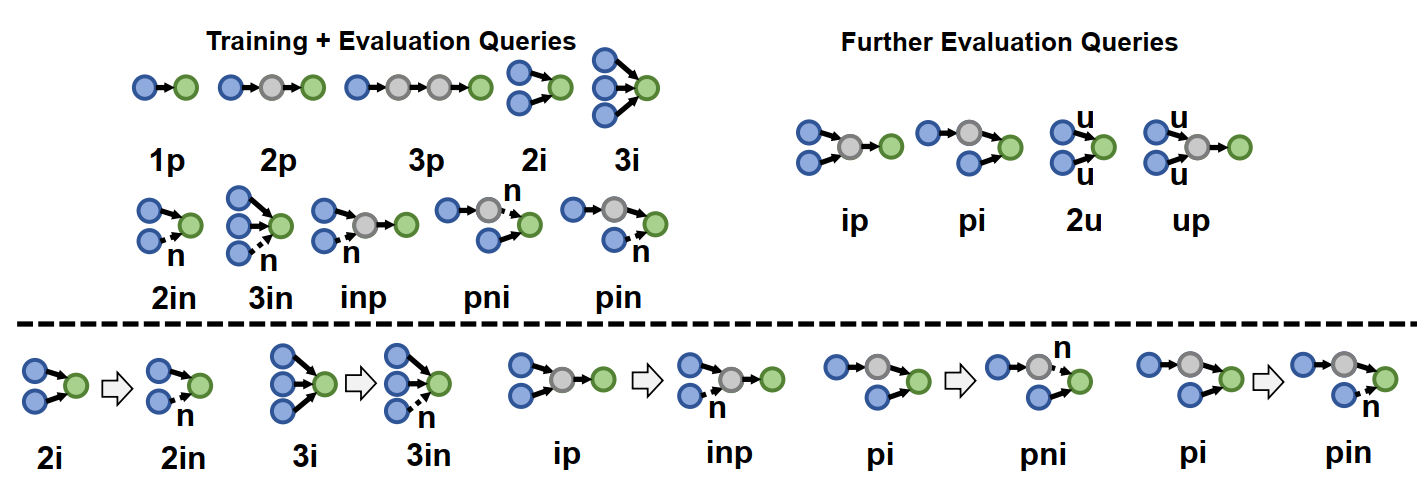

BetaE is a multi-hop knowledge graph reasoning framework. It represents queries and entities as probabilistic Beta embeddings, operates on them with neural logical operators, and is the first embedding-based framework that can handle any first-order logic query.

Method

Reasoning on incomplete KGs requires answering complex first-order logic (FOL) queries that involve existential quantification, conjunction, disjunction, and negation. To reason in a scalable and robust manner, the key is to embed queries and entities so that reasoning can take place in the embedding space; examples include GQE and Query2box. However, neither can handle negation, because the complement of a point or box in Euclidean space is no longer a point or box. Here we look at a different, probabilistic space and aim to embed queries and entities as Beta distributions. Beta distributions have flexible PDFs controlled by two shape parameters, and we design neural logical operators on the Beta embeddings accordingly for multi-hop reasoning.

For projection, we use an MLP that takes as input the Beta embedding of a query and a given relation, and outputs the projected Beta embedding. This represents a mapping from one fuzzy set of entities to another fuzzy set based on a relation.
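To make the shape of the projection operator concrete, here is a minimal sketch in plain Python. It is not the reference implementation: the dimensions, the random initialization, the ReLU hidden layer, and the softplus output (used here only to keep the shape parameters positive) are all assumptions made for illustration, and the names `init_layer` and `project` are hypothetical.

```python
import math
import random


def softplus(x):
    # Numerically stable log(1 + exp(x)); always non-negative.
    return math.log1p(math.exp(-abs(x))) + max(x, 0.0)


def linear(vec, weights, bias):
    # Dense layer: one output per row of the weight matrix.
    return [sum(w * v for w, v in zip(row, vec)) + b
            for row, b in zip(weights, bias)]


def init_layer(n_in, n_out, rng):
    # Hypothetical random initialization; a trained model would learn these.
    weights = [[rng.gauss(0.0, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    bias = [0.0] * n_out
    return weights, bias


def project(query, relation, layers):
    """Map a query's Beta parameters plus a relation vector to new Beta parameters.

    query    -- flat list [alpha_1..alpha_d, beta_1..beta_d], all positive
    relation -- relation embedding (assumed here to be a plain list of floats)
    layers   -- list of (weights, bias) pairs; the last one is the output layer
    """
    hidden = query + relation
    for weights, bias in layers[:-1]:
        hidden = [max(0.0, z) for z in linear(hidden, weights, bias)]  # ReLU
    out = linear(hidden, *layers[-1])
    # Assumption: softplus plus a small floor keeps every parameter > 0.
    return [softplus(z) + 1e-6 for z in out]


rng = random.Random(0)
d = 2                                   # hypothetical embedding dimension
query = [1.5, 0.7, 2.0, 3.1]            # d alphas followed by d betas
relation = [0.3, -1.2]                  # hypothetical relation embedding
layers = [init_layer(2 * d + d, 8, rng), init_layer(8, 2 * d, rng)]
projected = project(query, relation, layers)
```

The output has the same layout as the input query (d alphas followed by d betas), so projections can be chained to follow a multi-hop path.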

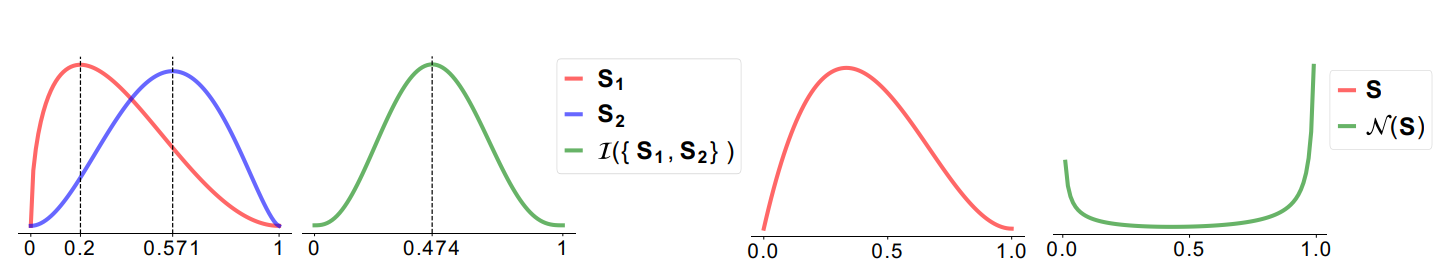

For conjunction, we take the weighted product of the PDFs of the Beta embeddings of the input queries. This has several benefits and aligns well with the real conjunction operator.
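The weighted product has a convenient closed form when the weights sum to one: the product of Beta PDFs raised to those weights is proportional to a Beta PDF whose shape parameters are the weighted sums of the inputs. The sketch below checks this numerically for a single dimension. The weights are fixed numbers here, which is an assumption for illustration (how they are produced is left to the paper), and the function names are hypothetical.

```python
import math


def beta_pdf(x, alpha, beta):
    # Density of Beta(alpha, beta) at x in (0, 1), computed in log space.
    log_norm = math.lgamma(alpha + beta) - math.lgamma(alpha) - math.lgamma(beta)
    return math.exp(log_norm + (alpha - 1) * math.log(x) + (beta - 1) * math.log(1 - x))


def intersect(params, weights):
    """Closed-form conjunction of Beta distributions for one dimension.

    params  -- list of (alpha, beta) pairs, one per input query
    weights -- non-negative weights assumed to sum to 1
    """
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    alpha = sum(w * a for w, (a, _) in zip(weights, params))
    beta = sum(w * b for w, (_, b) in zip(weights, params))
    return alpha, beta


def weighted_product(x, params, weights):
    # Unnormalized weighted product of the input PDFs at x.
    result = 1.0
    for w, (a, b) in zip(weights, params):
        result *= beta_pdf(x, a, b) ** w
    return result


params = [(2.0, 5.0), (4.0, 3.0)]
weights = [0.5, 0.5]
alpha, beta = intersect(params, weights)   # (3.0, 4.0)

# The ratio between the raw product and the closed-form PDF is the same
# constant at every x, i.e. the two differ only by normalization.
ratios = [weighted_product(x, params, weights) / beta_pdf(x, alpha, beta)
          for x in (0.1, 0.3, 0.5, 0.9)]
```

Because the result is again described by two shape parameters, the conjunction of any number of queries stays in the same embedding space as its inputs.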

For negation, we take the reciprocal of the shape parameters of the input Beta embedding, so that high-density regions become low-density and vice versa.
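As a small illustration, the sketch below reads "reciprocal" as the elementwise reciprocal of the two shape parameters, which is an interpretation stated here as an assumption. It shows a single dimension only, and `negate` is a hypothetical name.

```python
import math


def beta_pdf(x, alpha, beta):
    # Density of Beta(alpha, beta) at x in (0, 1), computed in log space.
    log_norm = math.lgamma(alpha + beta) - math.lgamma(alpha) - math.lgamma(beta)
    return math.exp(log_norm + (alpha - 1) * math.log(x) + (beta - 1) * math.log(1 - x))


def negate(alpha, beta):
    # Assumed negation: reciprocal of each shape parameter.
    return 1.0 / alpha, 1.0 / beta


# Beta(5, 5) is bell-shaped with its peak at 0.5.
alpha, beta = 5.0, 5.0
neg_alpha, neg_beta = negate(alpha, beta)      # Beta(0.2, 0.2), U-shaped

density_before = beta_pdf(0.5, alpha, beta)    # high density at the centre
density_after = beta_pdf(0.5, neg_alpha, neg_beta)  # low density at the centre

# Negating twice recovers the original parameters (up to rounding).
back_alpha, back_beta = negate(neg_alpha, neg_beta)
```

With parameters above 1 the density is concentrated in the interior of the interval; after negation both parameters fall below 1 and the mass moves toward the ends, so the centre that was likely becomes unlikely.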

Furthermore, Beta embeddings also give us a natural way to handle the uncertainty of a given query. The entropy of the learned Beta embedding correlates well with the number of answers a query has.
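For reference, the differential entropy of a Beta distribution has a standard closed form, H = ln B(α, β) − (α − 1)ψ(α) − (β − 1)ψ(β) + (α + β − 2)ψ(α + β), where ψ is the digamma function. The sketch below evaluates it with the standard library only; since `math` has no digamma, a hand-written approximation (recurrence plus asymptotic series) is used. How entropies are aggregated across embedding dimensions is not specified here, so the example covers a single dimension, and all function names are hypothetical.

```python
import math


def digamma(x):
    # Shift x upward with psi(x) = psi(x + 1) - 1/x, then apply the
    # asymptotic expansion, which is accurate for x >= 6.
    result = 0.0
    while x < 6.0:
        result -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    series = inv2 * (1.0 / 12.0 - inv2 * (1.0 / 120.0 - inv2 / 252.0))
    return result + math.log(x) - 0.5 / x - series


def log_beta_fn(alpha, beta):
    # Natural log of the Beta function B(alpha, beta).
    return math.lgamma(alpha) + math.lgamma(beta) - math.lgamma(alpha + beta)


def beta_entropy(alpha, beta):
    # Differential entropy of Beta(alpha, beta) in nats.
    return (log_beta_fn(alpha, beta)
            - (alpha - 1.0) * digamma(alpha)
            - (beta - 1.0) * digamma(beta)
            + (alpha + beta - 2.0) * digamma(alpha + beta))


uniform = beta_entropy(1.0, 1.0)     # uniform on (0, 1): entropy 0
broad = beta_entropy(2.0, 2.0)       # mildly peaked
narrow = beta_entropy(20.0, 20.0)    # sharply peaked
```

The uniform distribution has the largest entropy of the three and the sharply peaked one the smallest, which matches the intuition that a broad embedding corresponds to a query with many plausible answers.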

Please refer to our paper for detailed explanations and more results.

Code

A reference implementation of BetaE in Python is available on GitHub.

Datasets

The datasets used by BetaE are included in the code repository.

Contributors

The following people contributed to BetaE:

Hongyu Ren

Jure Leskovec